date_add(start, days) returns the date that is days days after start. It works on Dates, Timestamps and valid date/time Strings. A separate approach performs a precise subtraction or addition on a Timestamp, without ignoring the time portion.

To get the number of milliseconds between two Timestamp columns, cast both to double, subtract, multiply by 1000 and cast back to long. The number of seconds between two date/time columns can be computed as well. The day-based functions work on Dates, Timestamps and valid date/time Strings; when used with Timestamps, the time portion is ignored.

The current timestamp can be added as a new column to a Spark DataFrame using the current_timestamp() function from the pyspark.sql.functions module. When a string is converted and the format is omitted, the conversion follows the default casting rules.

Create a dataframe with sample date values: >df1 spark. So I tried the following code: from import lit df.

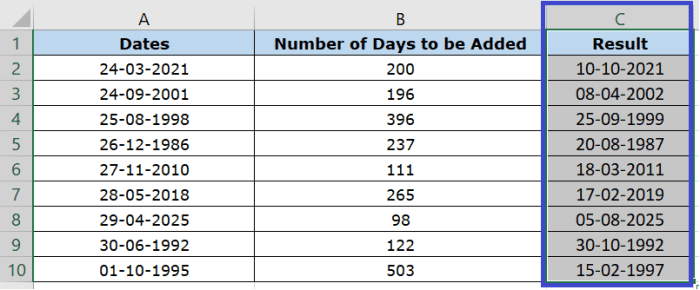

Let’s quickly jump to the examples and go through them one by one. In PySpark, you can do almost all the date operations you can think of using built-in functions. See all examples on this jupyter notebook. Note to developers: all of the PySpark functions here take strings as column names whenever possible.

I want to add two new columns, date & calendar week, in my pyspark data frame df.

Summary

date_add(col, num_days) and date_sub(col, num_days): add or subtract a number of days from the given date/timestamp. When used with Timestamps, the time portion is ignored.

Returns the number of days between two datetime columns. When used with Timestamps, the time portion is ignored.

Extract the day of the week of a given date/timestamp as an integer.

Add/subtract from a timestamp without ignoring the time portion.

The same operations are available through SQL. Get the current date: spark.sql('select current_date()').show(). Add days: spark.sql('select date_add(current_date(), 2)').show(). Days can be subtracted in the same way.
